Recently we ran some spikes on Azure Functions from an SRE (Site Reliability Engineering) perspective. The purpose was to give our SRE team a set of checklists and procedures for deploying and maintaining Azure Function resources. My own task was capacity management and scaling of the Function Apps. In this article I’m going to share my findings with you.

Generally speaking, in order to scale an app we need to take 3 sorts of actions:

- Prepare a list of KPI (Key Performance Indicator) baselines that indicate we’re hitting the resource limits. For instance, the average CPU utilization exceeds 70% in a 10 minute time window.

- Monitor KPIs such as CPU, memory, disk, and network adapter usage.

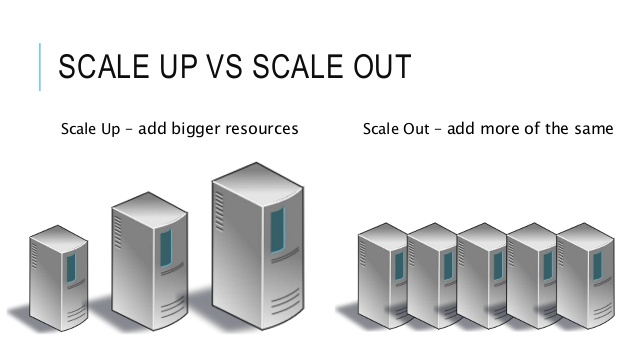

- Scale up or scale out the app once the KPIs exceed their thresholds. When we scale up, we increase the capacity of our resources, say by using more RAM or a better CPU. When we scale out, rather than using better resources we replicate them in multiple instances and use something like a load balancer to distribute the load across those instances. Scaling up or out adds to the cost of the app, so we may also need to scale down or scale in when the load drops. Say you’re running an online shop: one month before Christmas you scale up/out to handle more traffic, and after the new year you scale down/in to reduce your costs.

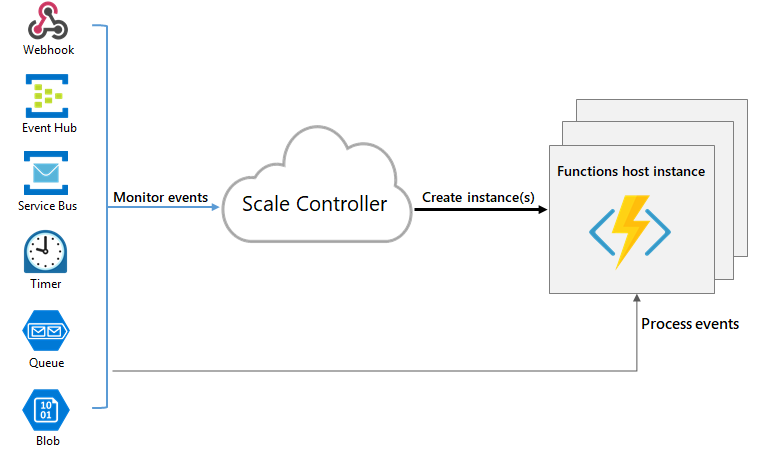
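To make the baseline idea concrete, here is a minimal sketch of the CPU check described above: average the samples in a time window and compare the result with the threshold. The function name `breaches_baseline` and the sample readings are made up for illustration; in practice the numbers would come from your monitoring tool.

```python
from statistics import mean


def breaches_baseline(samples, threshold=70.0):
    """Return True when the window's average is strictly above the threshold."""
    if not samples:
        # No data in the window: do not report a breach.
        return False
    return mean(samples) > threshold


# Hypothetical CPU readings (percent), one per minute over a 10 minute window.
cpu_window = [62, 68, 71, 75, 80, 78, 74, 69, 72, 77]

if breaches_baseline(cpu_window):
    print("CPU baseline exceeded: consider scaling up or out")
else:
    print("CPU within baseline")
```

Averaging over a window rather than reacting to a single reading keeps one short spike from triggering a scaling action.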

All-in-one scaling feature in Azure Functions

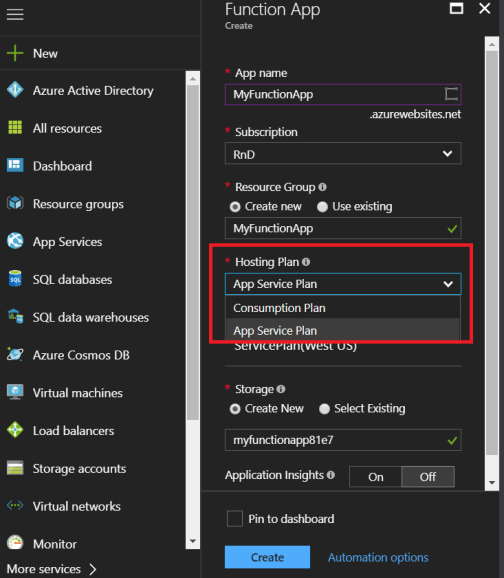

First of all we need to know about 2 different hosting plans that we can use in the Azure Function Apps:

- Consumption Plan: in this plan you are charged based on the usage of the app. The more function calls your app serves, the more you pay.

- App Service Plan: in this plan you run your app on a VM (Virtual Machine) with an Azure Storage Account, and pay a monthly fee based on the VM/Storage capacity, regardless of how many times your functions ran.

The good news is: if you are using the Consumption Plan (which is the default plan in function apps) you don’t even need to bother thinking about scaling. The platform itself takes care of the scaling, and you don’t need to be concerned about the number of instances of the app or how many computation resources (RAM, CPU, etc.) your app is using. The only thing you need to do is pay your bills!

If you’re using the App Service Plan though, you need to take care of scaling yourself, but again the good news is: you can manually scale up your app by choosing a different pricing tier and the App Service Plan has got a cool feature that takes care of scale out and scale in, automatically.

So let’s dig into the App Service Plan to see how we can scale up and scale out.

How to Scale up the Function App:

As shown in the image below, at the time of creating a function app, you can specify the hosting plan (Consumption plan or App Service Plan) but once the app is created you cannot change the plan. If you want to take care of scaling yourself, pick the App Service Plan.

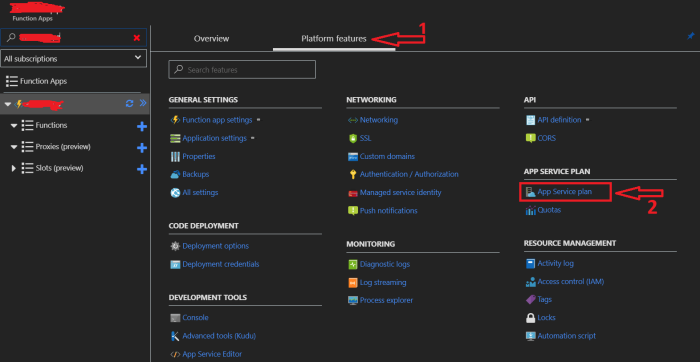

If you chose the App Service Plan, you can find the App Service Plan option in the Function App’s Platform features tab:

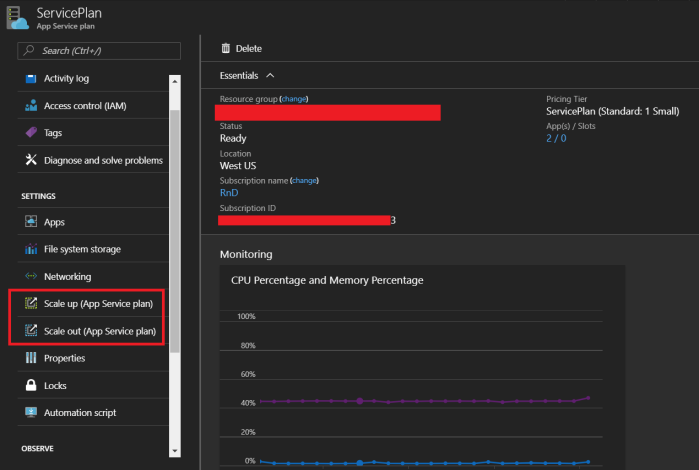

The App Service Plan is an Azure Resource itself, so once you click the “App Service Plan”, it opens up the resource which looks like the following image:

As you can see, you can scale up or scale out using the options shown. Let’s have a look at scale up. Once we choose to scale up, a list of pricing tiers shows up.

As you see, there is an estimated monthly fee, and each tier has a certain capacity and set of features.

Every App Service Plan has a pricing tier, and each pricing tier supports a certain number of instances. This means that each pricing tier can support a certain amount of scale out. For instance, the P1 tier supports up to 20 instances whereas the S1 tier supports up to 10 instances.
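As a small illustration of what a per-tier instance limit means in practice, the sketch below caps a requested instance count at the tier’s maximum. The limits in `MAX_INSTANCES` are just the two figures quoted above, and `clamp_instances` is a hypothetical helper, not part of any Azure SDK; check the current pricing page for the real limits of your tier.

```python
# Instance limits as quoted in this article; verify them against the
# current Azure pricing page before relying on them.
MAX_INSTANCES = {"S1": 10, "P1": 20}


def clamp_instances(tier, requested):
    """Limit a requested instance count to the range 1..tier maximum."""
    limit = MAX_INSTANCES[tier]
    return max(1, min(requested, limit))


# Asking for 15 instances is capped on S1 but allowed on P1.
for tier in ("S1", "P1"):
    print(tier, clamp_instances(tier, 15))
```

So if your load needs more instances than the tier allows, scaling out alone will not help; you would have to scale up to a higher tier first.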

Each pricing tier could also support auto scaling or only manual scaling. As you see, the Premium and Standard tiers support auto scaling and the Basic tiers only support manual scaling.

Setup Auto Scale Out

If your pricing tier supports auto scaling, you can take advantage of this cool feature for scaling out the app.

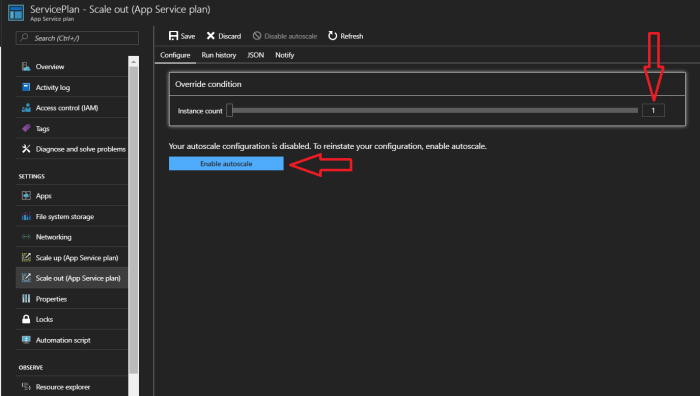

To do so, we need to get to the “Scale out” section in the App Service Plan. You can simply set the number of app instances manually or “Enable autoscale” through the button shown in the image.

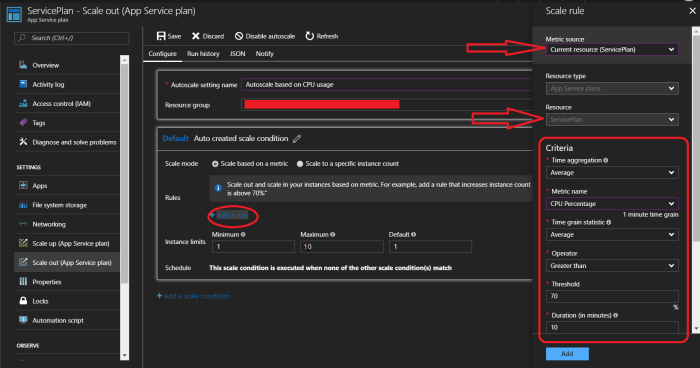

In auto scaling, we can define rules based on certain metrics. In defining rules, first of all, we need to specify the metric source, then specify the resource and finally define the criteria.

We have the following options for the metric source:

- Current Resource (ServicePlan)

- Storage queue

- Service Bus queue

- Application Insights

- Other resources

The thing is, the rule monitors a metric and scales accordingly. This metric could come from any relevant Azure Resource. In most cases, the Current Resource (the function app’s ServicePlan) or the app’s Storage Account are the best metric sources, as they reflect the performance load on the app’s resources. If your app is submitting telemetry data to an App Insights resource, that could be a good source of metrics as well.

The Current Resource could provide metrics such as the CPU/Memory/Disk/Network utilization of the current instances. The storage queue could provide metrics like the number of items in the queue.

After specifying what kind of metric source we want to use, we pick the specific resource and then choose from the metrics it provides. Then, based on the metric we chose, we define a condition (the criteria) and finally specify the resulting action.

For example, if we want to scale out based on the CPU usage, we choose Current Resource as the metric source, and then choose the Average CPU percentage in a 10 minute time window, and if it exceeds 70% (Condition), we’d increase the number of instances by 1 (Action).

The cool thing is we can either increase or decrease the number of instances, which means we can define rules to both scale out and scale in. For instance, we could have another rule which goes: if the average CPU percentage of the instances is under 10% in a 10 min time window, then decrease the number of instances by one.
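The two rules above can also be written down as data. The sketch below builds them as Python dictionaries and prints them as JSON. The field names follow the Azure Monitor autoscale settings schema as I understand it, so please verify them against the current documentation before using them in a template. `PLAN_ID` is a placeholder, `cpu_rule` is a helper made up for this post, and the one minute time grain and five minute cooldown are my own assumptions, since the portal walkthrough above does not set them explicitly.

```python
import json

# Placeholder, not a real resource ID: substitute your App Service Plan's ID.
PLAN_ID = "<app-service-plan-resource-id>"


def cpu_rule(operator, threshold, direction):
    """Build one rule on the plan's average CPU over a 10 minute window."""
    return {
        "metricTrigger": {
            "metricName": "CpuPercentage",
            "metricResourceUri": PLAN_ID,
            "timeGrain": "PT1M",       # assumed sampling interval
            "statistic": "Average",
            "timeWindow": "PT10M",     # the 10 minute window from the text
            "timeAggregation": "Average",
            "operator": operator,
            "threshold": threshold,
        },
        "scaleAction": {
            "direction": direction,
            "type": "ChangeCount",
            "value": "1",              # add or remove one instance
            "cooldown": "PT5M",        # assumed; not specified in this post
        },
    }


rules = [
    cpu_rule("GreaterThan", 70, "Increase"),  # scale out above 70%
    cpu_rule("LessThan", 10, "Decrease"),     # scale in below 10%
]

print(json.dumps(rules, indent=2))
```

Keeping the scale out and scale in thresholds far apart (70% and 10% here) helps avoid the app flipping back and forth between instance counts.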

The key thing in app scaling is to have proper criteria and baselines. These criteria could be defined based on a generic baseline, but they really depend on the app’s architecture and its main bottlenecks. It doesn’t make sense to define our auto-scaling rules based on CPU percentage when I/O is the app’s performance bottleneck.

I’ll try to write a separate post to cover various serverless app scenarios and suggest proper baselines for each scenario. Please feel free to send me a message to discuss your app’s architecture, and your findings or questions in this regard.

Cool! So yeah, I think that’s a wrap. In this post we discussed how to scale up/out or even scale down/in a Function App. Hopefully in the future we can go through choosing proper scaling criteria according to our app.